System Architecture

This page describes the structural model and configurable components of FanRows.

FanRows is implemented as a modular framework for embodied audio interaction. The architecture separates motion analysis, state regulation, audio control, and configuration in order to enable reproducible experimentation with continuous body–sound coupling.

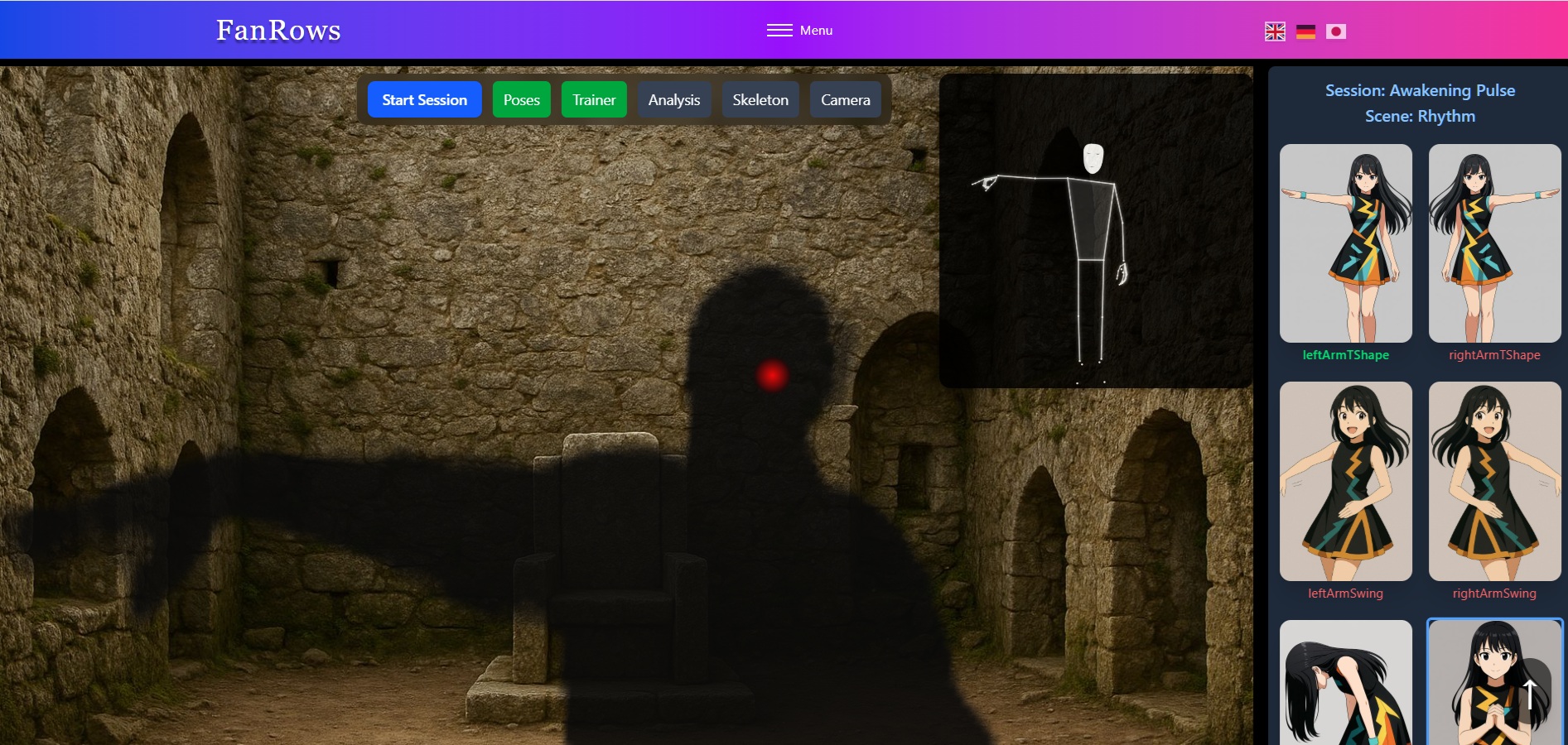

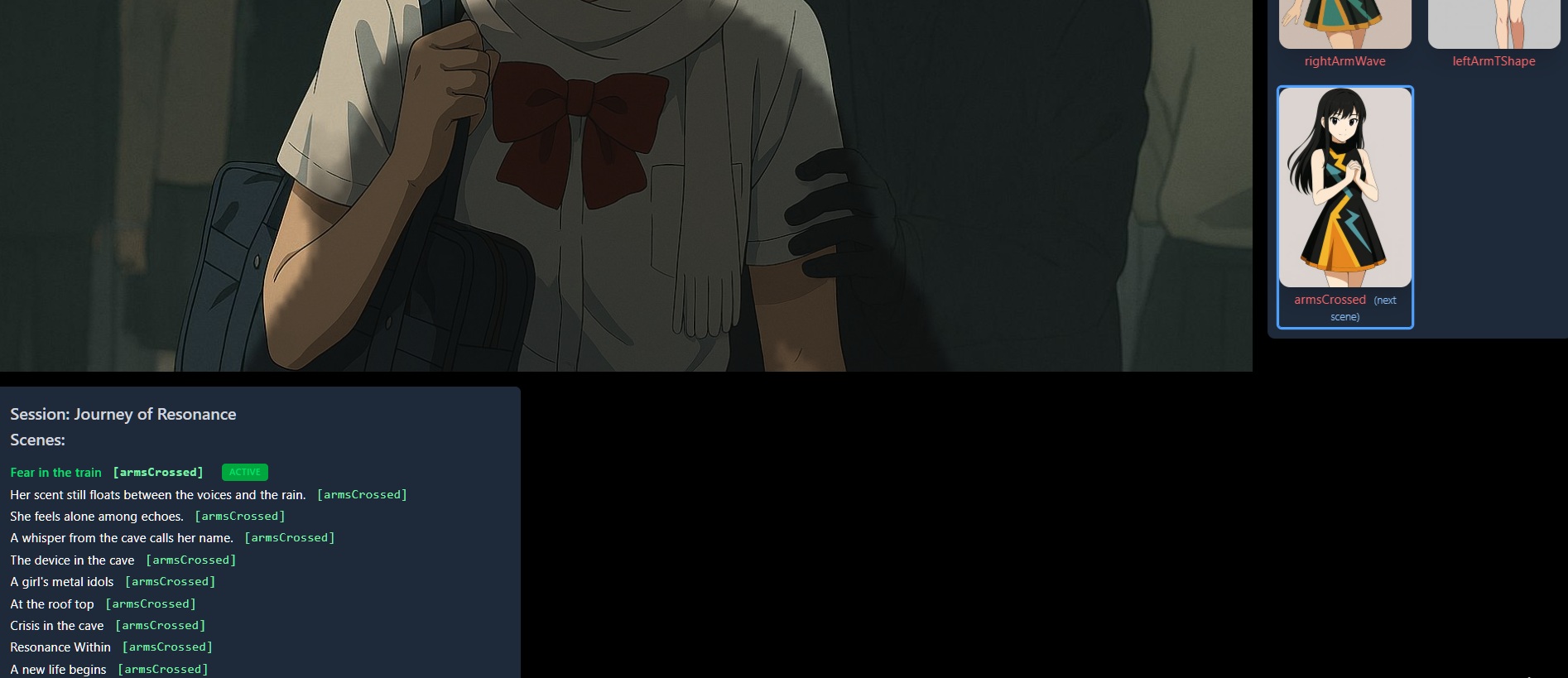

Example interaction session

This example primarily demonstrates the narrative capabilities of FanRows and the functionality of its scene management system. Scenes can structure interaction phases and guide participants through different regulatory environments. However, narrative progression is only one possible use of the system.

The same framework can also be used to construct complex sound spaces and layered sonic environments, enabling artists to design and explore evolving sound landscapes through embodied interaction.

Architecture Overview

FanRows separates the following four layers so that each can evolve independently while the interaction model stays stable:

- Motion capture & feature extraction

- Regulation layer (thresholds / persistence / transitions)

- Audio engine (layered loops, gain-based activation, FX)

- Configuration layer (JSON sessions/scenes + Studio calibration)

Architecture Diagram

Legend

- Feature extraction converts landmarks into continuous motion descriptors (angles, velocity, stability, persistence).

- Regulation turns descriptors into stable states via thresholds, hold-times, and state transitions.

- Audio engine renders state as layered sound via gain-based activation and effect modulation.

- Config + Studio define and calibrate sessions/scenes for reproducible experiments.

1. Motion Capture & Feature Extraction

Input is derived from webcam-based pose tracking.

The system continuously extracts features such as:

- joint angles

- angular velocity

- movement intensity

- posture stability

- temporal persistence

These features define the regulatory input space. The resulting feature vector represents a continuous state-space description of embodied motion rather than discrete symbolic commands.
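As an illustration of how two of these features could be derived from pose landmarks, the following is a minimal sketch. The `Point` shape and the function names are assumptions made for this example and are not taken from the FanRows code base.

```typescript
// Hypothetical landmark shape: 2D coordinates as a pose tracker might deliver them.
interface Point {
  x: number;
  y: number;
}

// Angle in degrees at joint `b`, formed by the segments from b to a and from b to c.
function jointAngle(a: Point, b: Point, c: Point): number {
  const v1 = { x: a.x - b.x, y: a.y - b.y };
  const v2 = { x: c.x - b.x, y: c.y - b.y };
  const dot = v1.x * v2.x + v1.y * v2.y;
  const cross = v1.x * v2.y - v1.y * v2.x;
  // atan2(|cross|, dot) yields an angle between 0 and 180 degrees.
  return (Math.atan2(Math.abs(cross), dot) * 180) / Math.PI;
}

// Angular velocity in degrees per second between two consecutive frames.
function angularVelocity(prevDeg: number, currDeg: number, dtSeconds: number): number {
  return dtSeconds > 0 ? (currDeg - prevDeg) / dtSeconds : 0;
}

// Usage: shoulder, elbow, wrist forming a right angle at the elbow.
const elbowAngle = jointAngle({ x: 0, y: 1 }, { x: 0, y: 0 }, { x: 1, y: 0 });
const elbowVelocity = angularVelocity(80, elbowAngle, 0.5);
```

Working on angles rather than raw coordinates makes the descriptor independent of where the participant stands in the camera frame.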

2. State & Regulation Logic

Interaction unfolds within bounded regulatory state spaces defined by threshold, persistence, and stability parameters.

Loop activation is achieved via:

- continuous gain modulation

- threshold-based persistence

- hold-time evaluation

- stability detection

State changes emerge from sustained spatial configurations rather than single gestures. This structure allows reproducible regulatory contexts without relying on timeline-based sequencing.
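The combination of threshold and hold-time evaluation described above can be sketched as a small gate. This is an illustrative assumption about how such logic might look; the class name, parameter names, and millisecond units are hypothetical.

```typescript
// Hypothetical gate: reports "active" only after the input has stayed at or
// above `threshold` for at least `holdMs` without interruption.
class HoldTimeGate {
  private readonly threshold: number;
  private readonly holdMs: number;
  private aboveSince: number | null = null;

  constructor(threshold: number, holdMs: number) {
    this.threshold = threshold;
    this.holdMs = holdMs;
  }

  // Feed one feature sample with its timestamp in milliseconds.
  update(value: number, nowMs: number): boolean {
    if (value < this.threshold) {
      this.aboveSince = null; // condition broken: restart the hold timer
      return false;
    }
    if (this.aboveSince === null) this.aboveSince = nowMs;
    return nowMs - this.aboveSince >= this.holdMs;
  }
}

// Usage: a brief dip at t = 800 ms resets the timer, so the state only
// becomes active once the value has been held for a full second afterwards.
const gate = new HoldTimeGate(0.7, 1000);
const samples: Array<[number, number]> = [
  [0.8, 0],
  [0.9, 500],
  [0.2, 800],
  [0.8, 900],
  [0.8, 1900],
];
const gateStates = samples.map(([value, t]) => gate.update(value, t));
```

A single gesture that crosses the threshold for a few frames never activates the gate, which is the property the text attributes to sustained configurations.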

3. Audio Engine

The audio engine manages layered sound states.

Key characteristics:

- volume-based activation (no start/stop playback semantics)

- scene-defined loop groups

- real-time parameter modulation

- continuous cross-layer blending

- synchronized playback across layers

All audio layers are regulated through continuous gain and parameter curves rather than discrete start/stop events.
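One way to picture gain-based activation is a slew limiter that moves each layer's gain toward its target at a bounded rate while the loop itself keeps playing. The sketch below is an assumption for illustration only; `slewGain` and its parameters are hypothetical and do not reflect the actual engine code.

```typescript
// Move `current` toward `target` by at most `maxRatePerSec * dtSeconds`,
// keeping the result in the range 0..1.
function slewGain(
  current: number,
  target: number,
  maxRatePerSec: number,
  dtSeconds: number,
): number {
  const maxStep = maxRatePerSec * dtSeconds;
  const delta = target - current;
  const step = Math.max(-maxStep, Math.min(maxStep, delta));
  return Math.min(1, Math.max(0, current + step));
}

// Usage: a silent layer is faded in over several control frames.
let layerGain = 0;
const gainTrace: number[] = [];
for (let frame = 0; frame < 5; frame++) {
  layerGain = slewGain(layerGain, 1, 0.5, 0.5); // at most 0.25 gain per frame
  gainTrace.push(layerGain);
}
```

Because the layer is never stopped, all loops stay phase-aligned and a layer that fades back in resumes in sync with the others.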

4. Configuration System

The configuration system defines how motion–sound relationships are structured and calibrated.

Sessions and scenes are defined through JSON configuration files and can be edited visually in the Studio environment.
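The actual JSON schema is not shown on this page. To give a sense of what a session file and a basic validation step could look like, here is a hypothetical sketch; every field name (`initialScene`, `thresholds`, `holdMs`, `next`, and so on) is an assumption made for this example.

```typescript
// All field names below are illustrative assumptions, not the FanRows schema.
interface LoopConfig {
  id: string;
  file: string;
  baseGain: number;
}
interface SceneConfig {
  id: string;
  loops: LoopConfig[];
  thresholds: { [feature: string]: number };
  holdMs: number;
  next?: string; // id of the scene entered when the transition condition holds
}
interface SessionConfig {
  name: string;
  initialScene: string;
  scenes: SceneConfig[];
}

const sessionJson = `{
  "name": "example-session",
  "initialScene": "intro",
  "scenes": [
    { "id": "intro",
      "loops": [{ "id": "pad", "file": "pad.wav", "baseGain": 0.0 }],
      "thresholds": { "armRaise": 0.7 },
      "holdMs": 1500,
      "next": "main" },
    { "id": "main", "loops": [], "thresholds": {}, "holdMs": 1000 }
  ]
}`;

// Parse the JSON and check that every scene reference resolves.
function loadSession(json: string): SessionConfig {
  const session = JSON.parse(json) as SessionConfig;
  const ids = new Set(session.scenes.map((s) => s.id));
  if (!ids.has(session.initialScene)) throw new Error("initialScene not found");
  for (const scene of session.scenes) {
    if (scene.next !== undefined && !ids.has(scene.next)) {
      throw new Error("scene " + scene.id + " points to unknown scene " + scene.next);
    }
  }
  return session;
}

const exampleSession = loadSession(sessionJson);
```

Keeping the whole session in one declarative file is what makes an experiment repeatable: the same file reproduces the same mappings, thresholds, and transitions.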

Interaction Structure

Sessions

A session represents a concrete interaction instance under a defined configuration.

Sessions serve as structured observation environments in which:

- stabilization dynamics

- drift behavior

- regulatory patterns

can be examined over time.

A session is not a performance or composition. It is a defined system state under observation. Sessions can be logged and analyzed to examine temporal stability, transition dynamics, and emergent coherent windows.

Scenes

Scenes define bounded regulatory spaces within a session.

A scene specifies:

- active motion–sound mappings

- loop groups

- regulatory thresholds

- transition conditions

Scene transitions are triggered by sustained body configurations, not by discrete events. Scenes structure the interaction space without introducing timeline-based progression.
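A scene transition driven by a sustained body configuration can be sketched as a small state machine. The `Scene` shape and the `SceneRunner` class below are hypothetical and only illustrate the principle; the boolean `conditionMet` stands in for whatever pose or feature test a scene defines.

```typescript
// Hypothetical scene description; `holdMs` and `next` are illustrative names.
interface Scene {
  id: string;
  holdMs: number; // how long the transition condition must be sustained
  next?: string; // target scene id; omitted for a terminal scene
}

class SceneRunner {
  private readonly scenes: Map<string, Scene>;
  private current: Scene;
  private heldSince: number | null = null;

  constructor(scenes: Scene[], initialId: string) {
    this.scenes = new Map<string, Scene>();
    for (const scene of scenes) this.scenes.set(scene.id, scene);
    const initial = this.scenes.get(initialId);
    if (initial === undefined) throw new Error("unknown initial scene: " + initialId);
    this.current = initial;
  }

  // Called once per frame; returns the id of the scene that is active afterwards.
  update(conditionMet: boolean, nowMs: number): string {
    const nextId = this.current.next;
    if (!conditionMet || nextId === undefined) {
      this.heldSince = null; // configuration lost: the hold starts over
      return this.current.id;
    }
    if (this.heldSince === null) this.heldSince = nowMs;
    const next = this.scenes.get(nextId);
    if (next !== undefined && nowMs - this.heldSince >= this.current.holdMs) {
      this.current = next;
      this.heldSince = null;
    }
    return this.current.id;
  }
}

// Usage: the pose is interrupted at 700 ms, so the switch happens at 1800 ms.
const runner = new SceneRunner(
  [
    { id: "intro", holdMs: 1000, next: "main" },
    { id: "main", holdMs: 1000 },
  ],
  "intro",
);
const visited = [
  runner.update(true, 0),
  runner.update(true, 600),
  runner.update(false, 700),
  runner.update(true, 800),
  runner.update(true, 1800),
];
```

Nothing in this sketch depends on elapsed session time, only on how long the condition has been held, which matches the absence of timeline-based progression.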

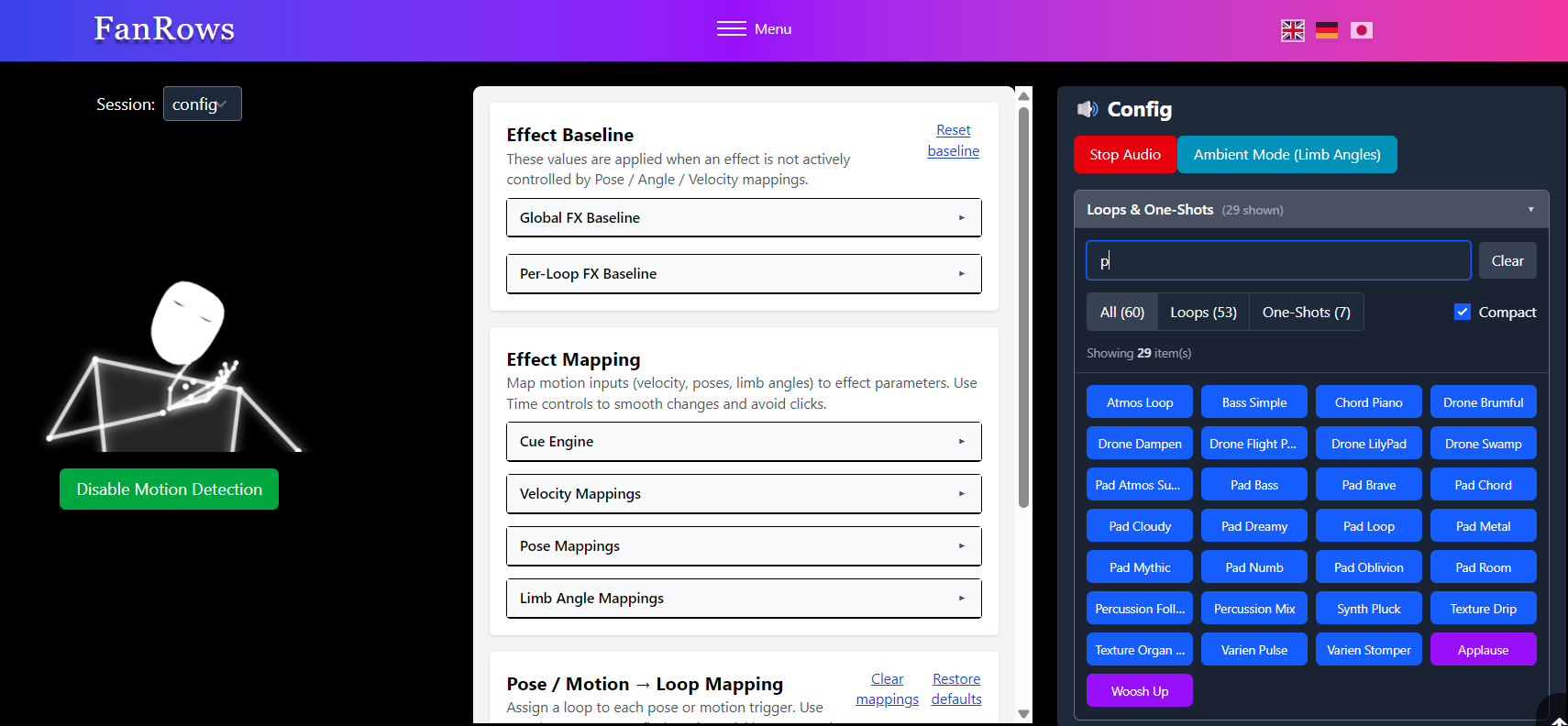

Studio (Configuration Environment)

Studio provides calibration, inspection, and structural refinement tools. It is designed to stabilize experimental conditions and expose regulatory parameters rather than to function as a traditional music production interface. Studio allows controlled adjustment of thresholds, persistence logic, parameter mappings, and scene transitions.

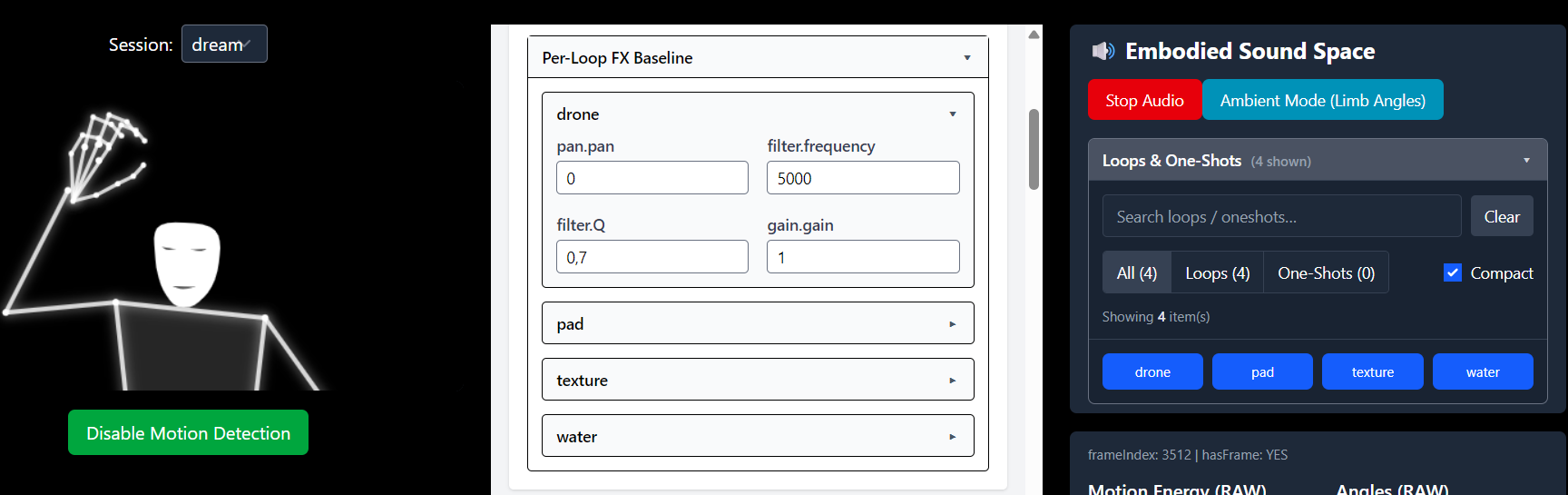

Studio Overview

The main Studio interface exposes scene configuration, loop definitions, and regulatory parameter mappings. It provides structured access to motion–sound relationships while preserving the modular system architecture.

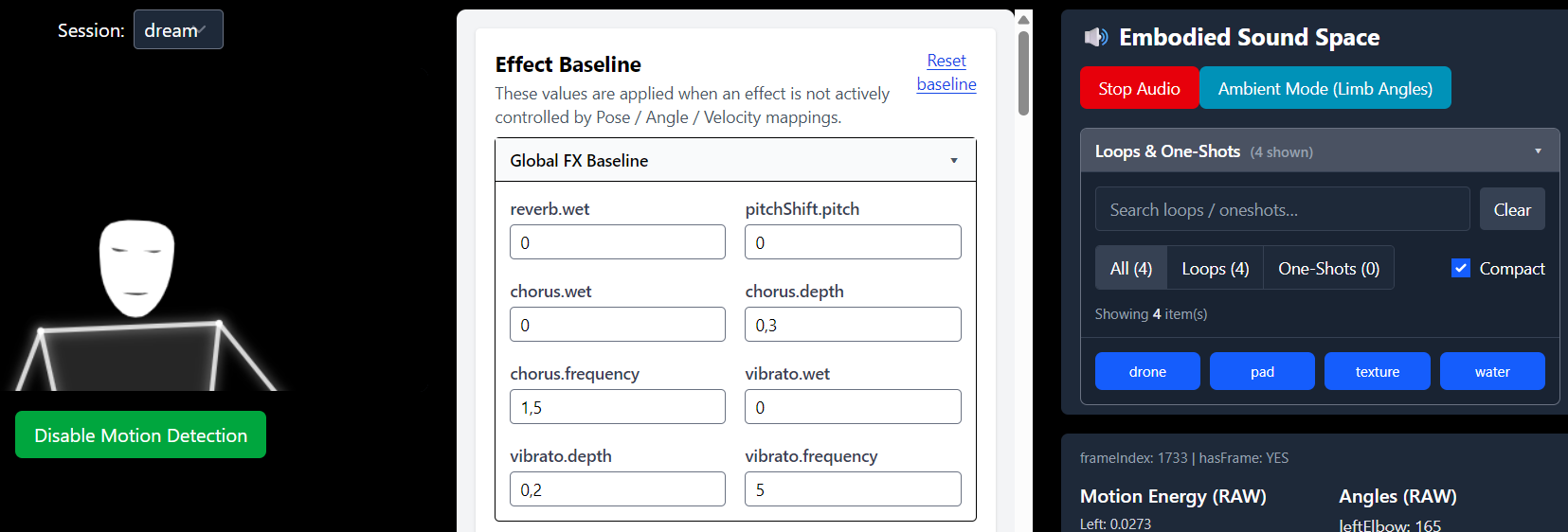

Effect Baseline

The baseline defines the default parameter state of the audio system before dynamic modulation is applied. It establishes stable conditions for scene-level and loop-level processing.

Global FX Baseline

The global baseline controls scene-wide audio parameters such as filter states, spatial characteristics, and global modulation settings. These parameters affect all active layers within the current scene.

Per-Loop FX Baseline

Per-loop baseline settings define default parameter states for individual sound layers. This allows differentiated tonal or spatial conditions within the same scene.

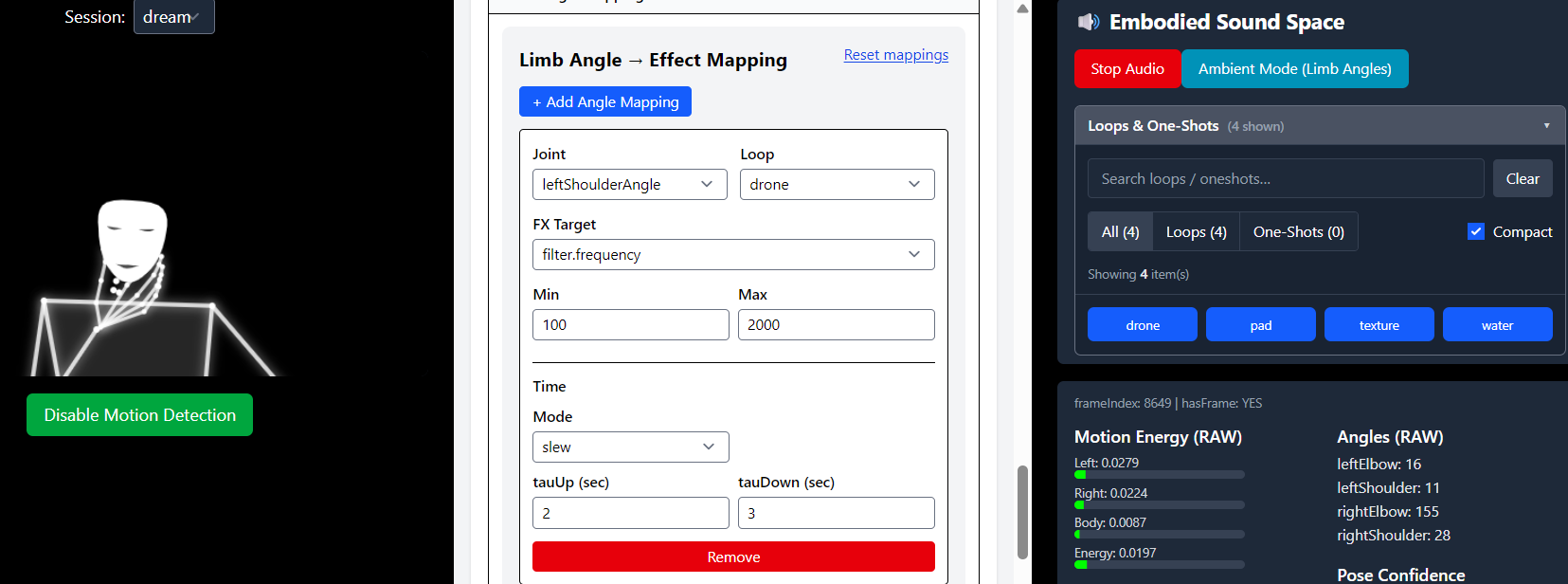

Effect Mapping

Effect mappings define how continuous motion features influence audio parameters in real time. Mappings operate as modulation layers above the established baseline.
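The relationship between baseline and mapping can be illustrated with a small function that adds a feature-dependent offset to a baseline value. This is a sketch under assumed names (`EffectMapping`, `applyMapping`, `depth`); the real mapping model may differ, for example by using curves instead of a linear offset.

```typescript
// Hypothetical mapping description.
interface EffectMapping {
  inMin: number; // feature value mapped to no modulation
  inMax: number; // feature value mapped to full modulation
  depth: number; // signed offset added to the baseline at full modulation
  paramMin: number; // lower bound of the audio parameter
  paramMax: number; // upper bound of the audio parameter
}

// Baseline plus a linear, clamped modulation offset.
function applyMapping(baseline: number, feature: number, m: EffectMapping): number {
  const span = m.inMax - m.inMin;
  const t = span === 0 ? 0 : Math.min(1, Math.max(0, (feature - m.inMin) / span));
  const value = baseline + t * m.depth;
  return Math.min(m.paramMax, Math.max(m.paramMin, value));
}

// Usage: a filter cutoff with a 400 Hz baseline that an elbow angle
// between 0 and 180 degrees can open by up to 1600 Hz.
const cutoffMapping: EffectMapping = {
  inMin: 0,
  inMax: 180,
  depth: 1600,
  paramMin: 100,
  paramMax: 2000,
};
const cutoffHz = applyMapping(400, 90, cutoffMapping);
```

When the feature is at or below `inMin`, the parameter rests at its baseline, so removing a mapping never changes the scene's default sound.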

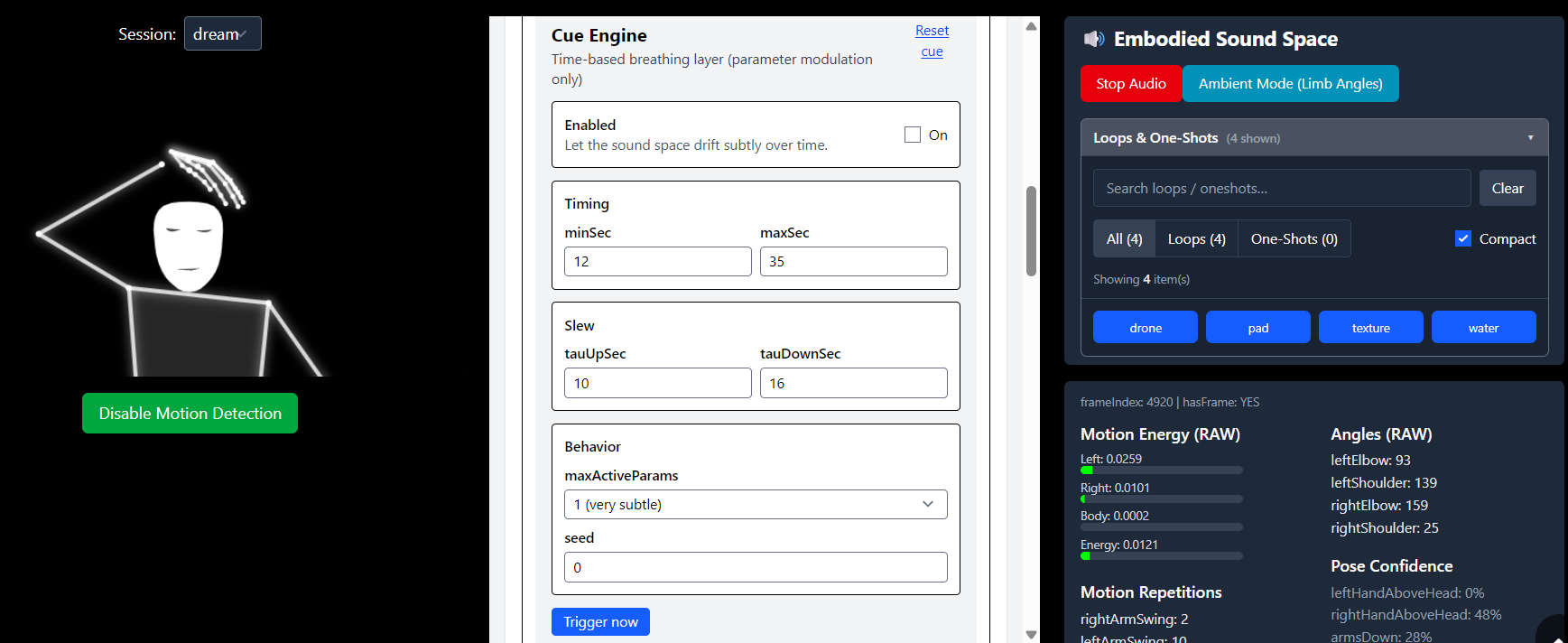

Cue Engine

The Cue Engine introduces controlled temporal variation within a scene. It modulates selected parameters over time using probabilistic activation and gradual parameter curves, without interrupting playback.
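To make the idea of probabilistic activation with gradual curves concrete, the following sketch triggers a parameter ramp with a fixed probability per check and eases the value with a smoothstep curve. The `Cue` fields, the `CueRamp` class, and the choice of smoothstep are assumptions for illustration; the random source is injected so the behavior can be reproduced.

```typescript
type Rng = () => number; // returns a value in [0, 1)

// Hypothetical cue description.
interface Cue {
  probabilityPerCheck: number; // chance that a check starts the ramp
  from: number; // parameter value before the cue
  to: number; // parameter value once the ramp has finished
  rampMs: number; // ramp duration in milliseconds
}

class CueRamp {
  private readonly cue: Cue;
  private readonly rng: Rng;
  private startedAt: number | null = null;

  constructor(cue: Cue, rng: Rng) {
    this.cue = cue;
    this.rng = rng;
  }

  // Called at a regular interval; returns true if the ramp starts now.
  maybeTrigger(nowMs: number): boolean {
    if (this.startedAt !== null) return false; // already started
    if (this.rng() < this.cue.probabilityPerCheck) {
      this.startedAt = nowMs;
      return true;
    }
    return false;
  }

  // Current parameter value; playback is never interrupted, only modulated.
  value(nowMs: number): number {
    if (this.startedAt === null) return this.cue.from;
    const t = Math.min(1, Math.max(0, (nowMs - this.startedAt) / this.cue.rampMs));
    const eased = t * t * (3 - 2 * t); // smoothstep easing
    return this.cue.from + (this.cue.to - this.cue.from) * eased;
  }
}

// Usage with a scripted random source: the first draw misses, the second hits.
let drawCount = 0;
const scriptedRng: Rng = () => (drawCount++ === 0 ? 0.9 : 0.1);
const cue = new CueRamp({ probabilityPerCheck: 0.25, from: 0, to: 1, rampMs: 2000 }, scriptedRng);
const firstCheck = cue.maybeTrigger(0);
const secondCheck = cue.maybeTrigger(1000);
const midValue = cue.value(2000);
const endValue = cue.value(3000);
```

In a live session `Math.random` could be passed as the random source; a seeded generator would be the better choice when a session needs to be replayed exactly.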

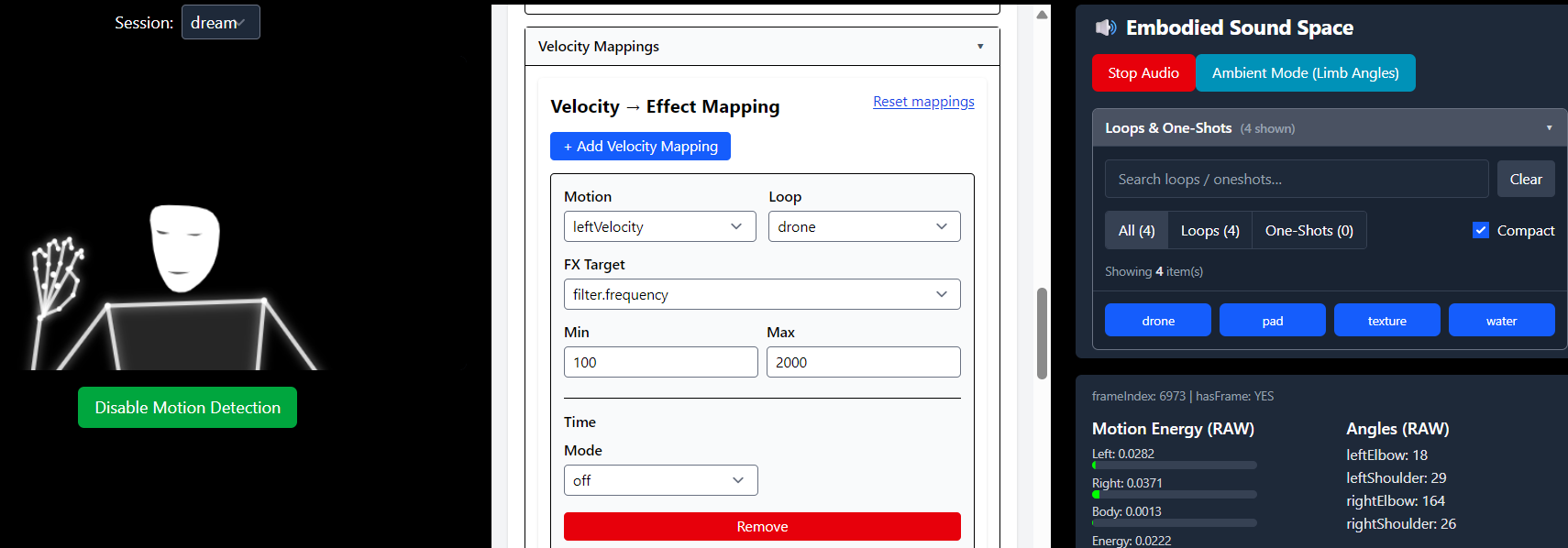

Velocity Mappings

Velocity mappings translate movement intensity into continuous parameter modulation. This enables dynamic responsiveness based on motion speed rather than discrete triggers.
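A velocity mapping needs a movement-intensity signal that does not jump from frame to frame. One common approach, shown here purely as an assumption about how this could be done, is to compute landmark speed and pass it through a one-pole low-pass filter. The names `landmarkSpeed` and `smooth` are hypothetical.

```typescript
interface Pt {
  x: number;
  y: number;
}

// Speed of one landmark between two frames, in coordinate units per second.
function landmarkSpeed(prev: Pt, curr: Pt, dtSeconds: number): number {
  if (dtSeconds <= 0) return 0;
  return Math.hypot(curr.x - prev.x, curr.y - prev.y) / dtSeconds;
}

// One-pole low-pass: `alpha` near 0 gives heavy smoothing, 1 gives none.
function smooth(previous: number, input: number, alpha: number): number {
  return previous + alpha * (input - previous);
}

// Usage: a wrist moves 0.5 units in half a second; the smoothed intensity
// follows the raw speed gradually instead of jumping to it.
const rawSpeed = landmarkSpeed({ x: 0, y: 0 }, { x: 0.3, y: 0.4 }, 0.5);
let intensity = 0;
intensity = smooth(intensity, rawSpeed, 0.25);
const afterOneFrame = intensity;
intensity = smooth(intensity, rawSpeed, 0.25);
const afterTwoFrames = intensity;
```

The smoothed intensity can then drive any continuous audio parameter without producing audible steps.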

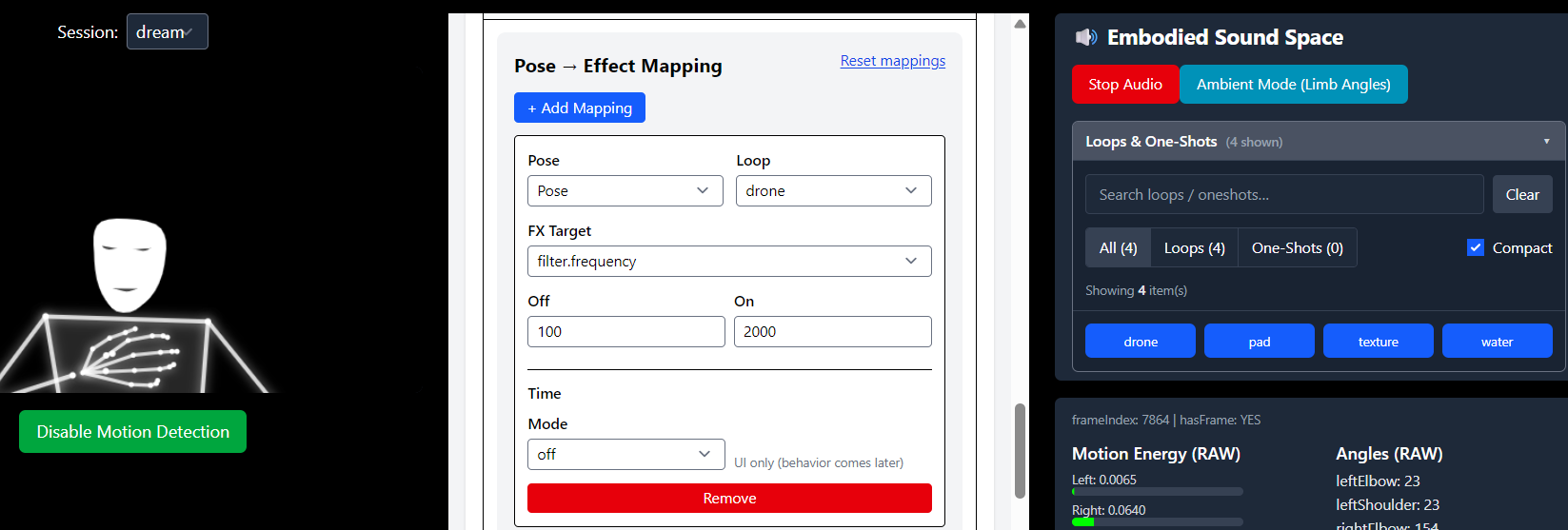

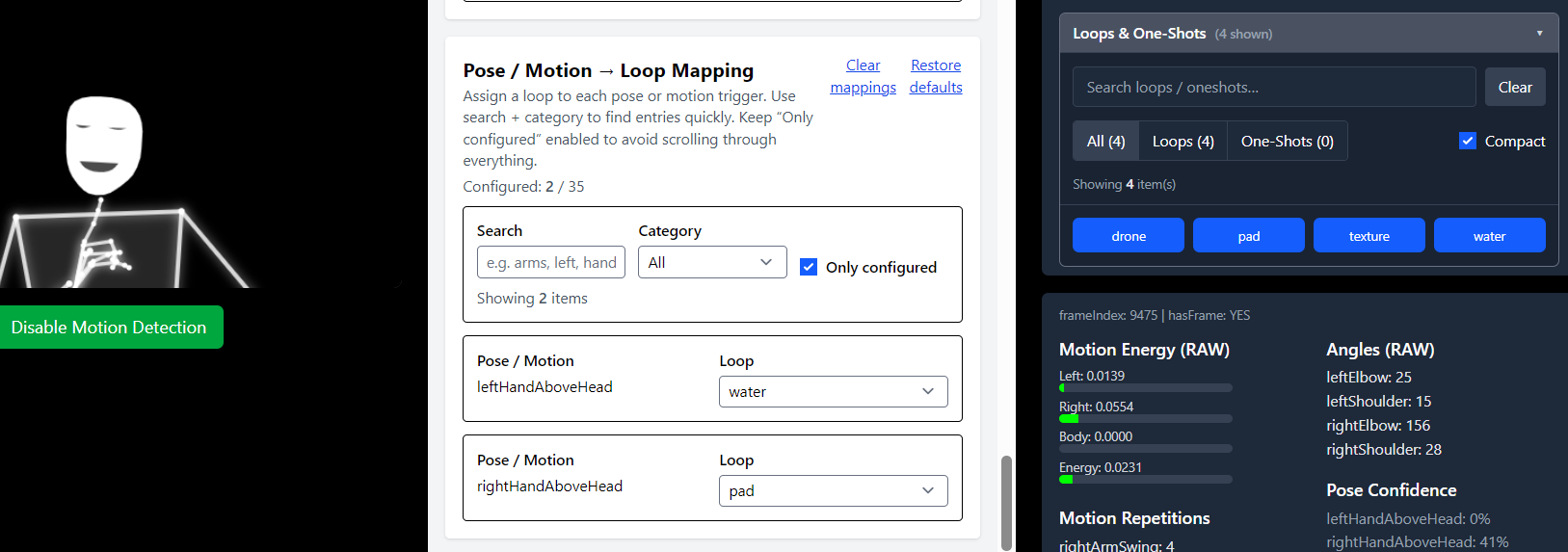

Pose Mappings

Pose mappings associate sustained spatial configurations with parameter changes or state transitions. Activation depends on temporal persistence rather than instantaneous detection.

Limb Angle Mappings

Limb angle mappings allow specific joint angle relationships to regulate audio parameters. This enables fine-grained control based on spatial articulation rather than gross movement.

Pose / Motion → Loop Mapping

This mapping layer defines which poses or motion states regulate specific loop activations. Loop behavior is governed through continuous gain modulation rather than start–stop logic.

Mapping Confidence

The confidence view displays detection stability and threshold evaluation in real time. It provides diagnostic insight into how reliably the system interprets motion and posture states during interaction.
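How the confidence value is computed is not specified here. As one plausible illustration, detection stability could be expressed as the fraction of recent frames in which a feature met its threshold. The `StabilityWindow` class below is a hypothetical sketch of that idea, not the metric Studio actually displays.

```typescript
// Sliding window over the most recent threshold evaluations.
class StabilityWindow {
  private readonly size: number;
  private readonly hits: boolean[] = [];

  constructor(size: number) {
    this.size = size;
  }

  // Record one frame and return the share of windowed frames at or above threshold.
  push(value: number, threshold: number): number {
    this.hits.push(value >= threshold);
    if (this.hits.length > this.size) this.hits.shift();
    const hitCount = this.hits.filter((hit) => hit).length;
    return hitCount / this.hits.length;
  }
}

// Usage: a four-frame window with one frame that falls below the threshold.
const stabilityWindow = new StabilityWindow(4);
const stabilityTrace = [0.6, 0.7, 0.4, 0.8, 0.9].map((v) => stabilityWindow.push(v, 0.5));
const latestStability = stabilityTrace[stabilityTrace.length - 1];
```

A value near 1 would indicate that a pose is being held reliably, while a value that hovers around 0.5 suggests the threshold sits too close to the participant's natural variation.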

Privacy & Performance: Local-First Architecture

FanRows is built on a "local-first" architecture. Unlike many AI-driven systems, FanRows transmits no biometric data, video frames, or pose landmarks to external servers.

- Client-Side Processing: All motion feature extraction (MediaPipe) and audio synthesis (Tone.js) occur entirely within the user's browser.

- Data Sovereignty: The interaction remains private and ephemeral. No personal data is stored, tracked, or analyzed outside the local session environment.

- Latency Optimization: Local execution eliminates network jitter, ensuring the high-precision regulatory loops (Slew/Inertia) operate with sub-millisecond consistency.

System Properties

FanRows integrates:

- webcam-based pose tracking

- continuous feature extraction

- gain-based audio layer regulation

- scene-defined state spaces

- reproducible session configurations via JSON

- session logging for post-hoc temporal analysis

- fully local browser execution

The architecture enables controlled modification of regulatory parameters while maintaining a stable interaction model.

